Few-Shot Visual Relationship Co-Localization

Revant Teotia*, Vaibhav Mishra*, Mayank Maheshwari*, Anand Mishra

* : Equal Contribution

Indian Institute of Technology, Jodhpur

ICCV 2021

[Paper][Code][Supplementry Material][Slides][Poster][Short Talk]

Abstract

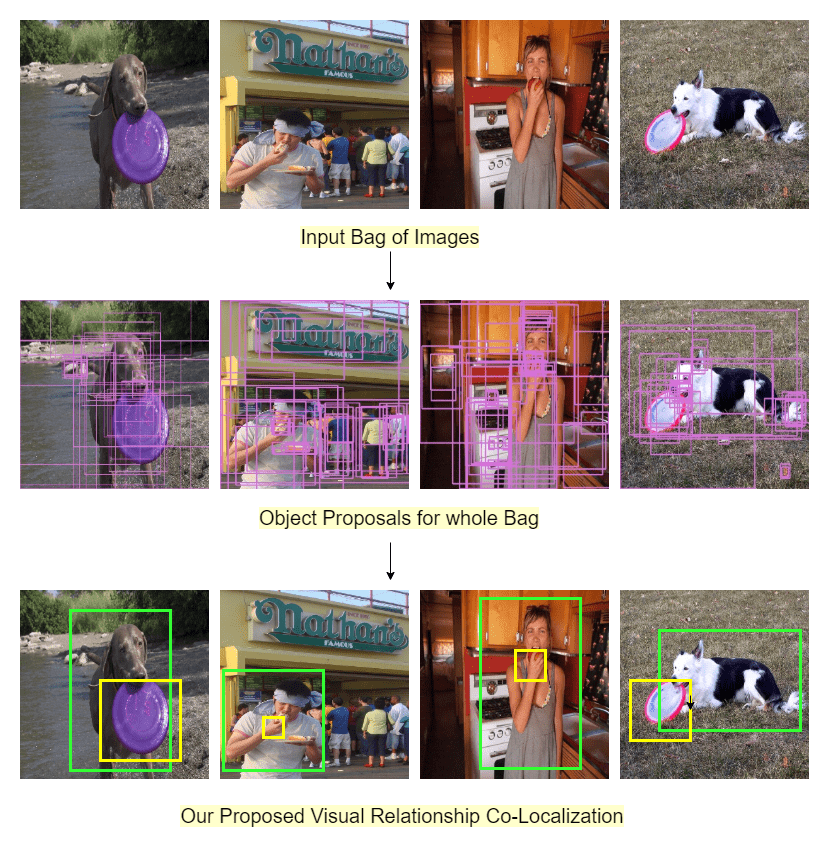

In this paper, given a small bag of images, each containing a common but latent predicate, we are interested in localizing visual subject-object pairs connected via the common predicate in each of the images. We refer to this novel problem as visual relationship co-localization or VRC as an abbreviation. VRC is a challenging task, even more so than the well-studied object co-localization task. This becomes further challenging when using just a few images, the model has to learn to co-localize visual subject-object pairs connected via unseen predicates. To solve VRC, we propose an optimization framework to select a common visual relationship in each image of the bag. The goal of the optimization framework is to find the optimal solution by learning visual relationship similarity across images in a few-shot setting. To obtain robust visual relationship representation, we utilize a simple yet effective technique that learns relationship embedding as a translation vector from visual subject to visual object in a shared space. Further, to learn visual relationship similarity, we utilize a proven meta-learning technique commonly used for few-shot classification tasks. Finally, to tackle the combinatorial complexity challenge arising from an exponential number of feasible solutions, we use a greedy approximation inference algorithm that selects approximately the best solution. We extensively evaluate our proposed framework on variations of bag sizes obtained from two challenging public datasets, namely VrR-VG and VG-150, and achieve impressive visual co-localization performance.

Highlights

- Introduced a novel task of Visual Relationship Co-Localization (VRC).

- Posed VRC as a labelling problem, and propose an optimization framework to solve it.

- Used meta-learning based approach to colocalize visual relationships on bags of images.

Bibtex

Please cite this work as follows::

@InProceedings{teotiaMMM2021,

author = "Teotia, Revant and Mishra, Vaibhav and Maheshwari, Mayank and Mishra, Anand",

title = "Few-shot Visual Relationship Co-Localization",

booktitle = "ICCV",

year = "2021",

}